This is not the first time we have talked about Mailchimp’s A/B Testing, but the functionality has just been updated to make it more intuitive and powerful, and we could not miss the opportunity to show it to you. Used properly, this tool lets us optimize our newsletter campaigns and steadily improve our open and click-through rates.

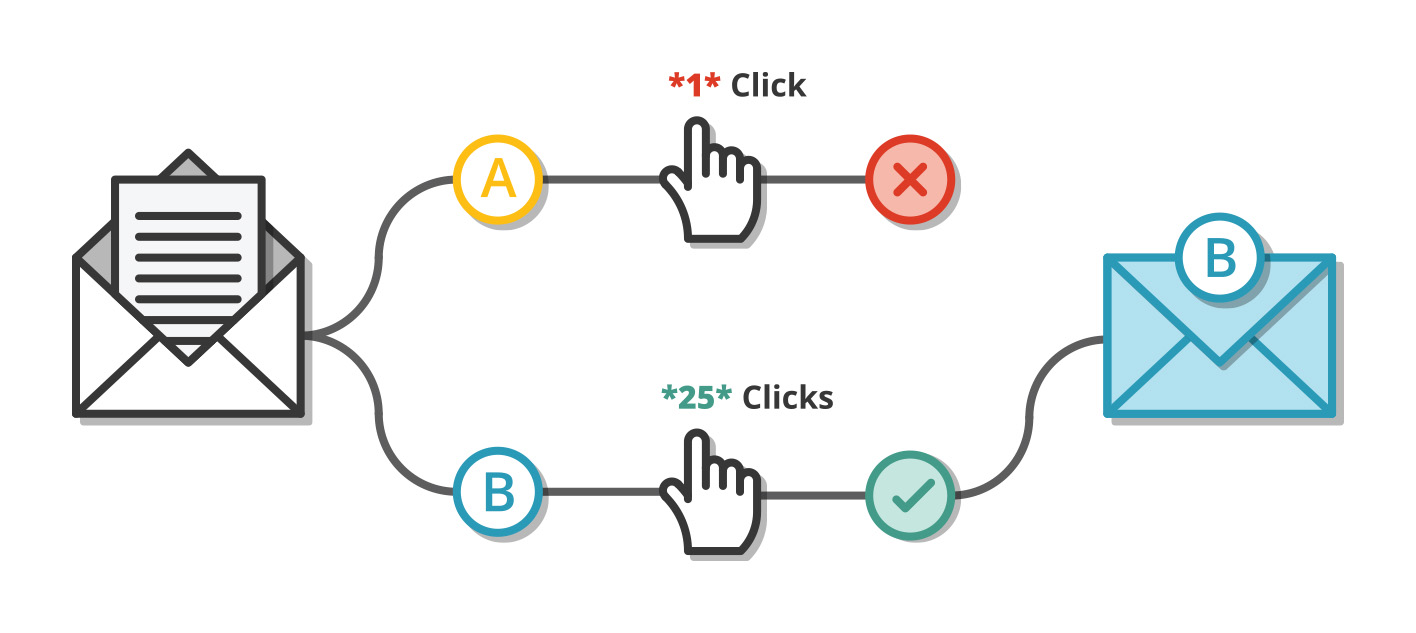

What is an A/B test in e-mail marketing?

An A/B test consists of sending two versions (A and B) of the same campaign to part of our mailing list to see which one performs better, and then sending the more effective version to the rest of the list.

The variables

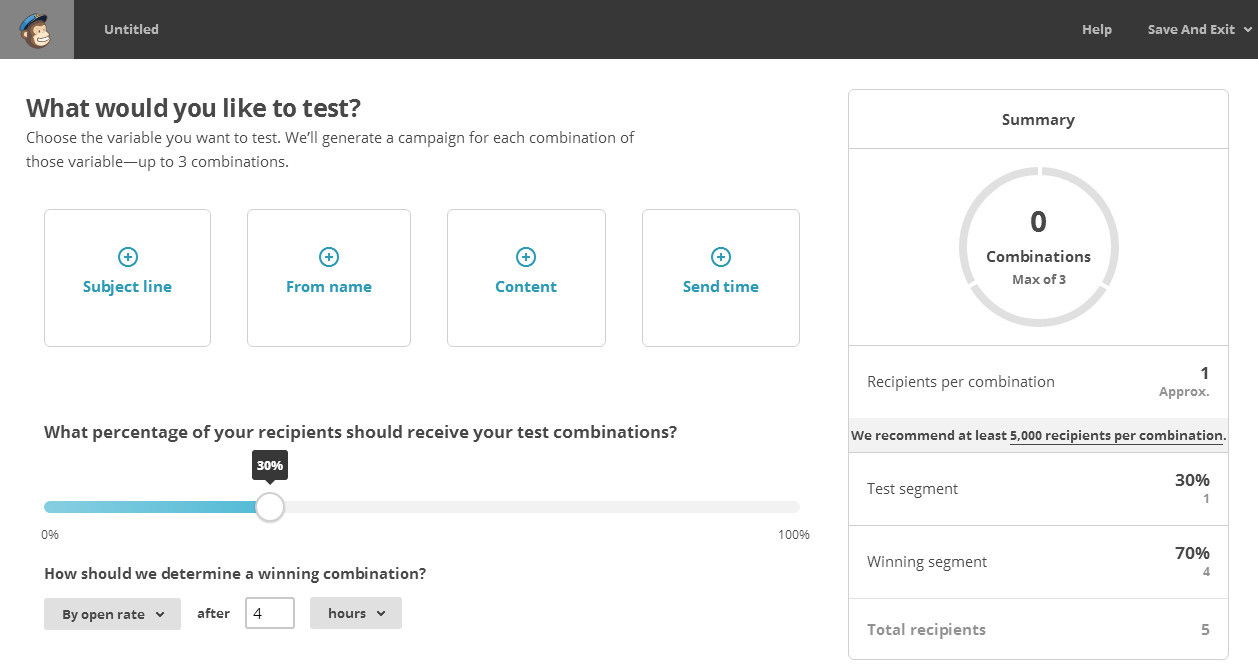

Within an A/B test, Mailchimp lets us test different elements of a newsletter campaign:

- Subject of the message: two identical emails will be sent but with different subjects. It is useful to test, for example, whether a professional or a more friendly tone is more appropriate.

- Sender: just as the subject influences the user’s decision to open or not open the email, the person who sent it is also important. In this section we can choose two different email addresses to test which one works best.

- Content: perhaps the most important variable. To use it, you need to prepare two templates for the same campaign that differ in the elements you want to compare. Mailchimp will then send the two variations to the test sample and select the one that worked best. It is useful, for example, to find out whether a series of links should include a photo or just text, whether to add company information or only the news, or whether a different or larger font works better.

- Sending time: depending on the content and the recipients, the time at which the email is sent can matter a great deal. An A/B test is a good way to find out which time slot works best.

Test sample

After selecting the variable or variables to test, we need to choose what percentage of our mailing list will take part in the test. The most suitable percentage depends on the number of recipients in the entire campaign. Mailchimp recommends 20%, which is adequate if our database has at least 5000 users. In that case the sample would be 1000 users, of which 500 would receive version A and 500 version B, and the winning mailing would be sent to the remaining 4000 users. However, if our database has only 500 users, for example, it would be advisable to increase the split to 30% or 40%, since the smaller the sample, the less representative it is and the less reliable the conclusions we draw from the test.
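The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names are ours, not part of Mailchimp, and the margin-of-error formula (a normal approximation at roughly 95% confidence) is our own addition to show why small samples give less reliable conclusions.

```python
import math


def split_sample(list_size: int, test_fraction: float) -> dict:
    """Return how many recipients fall into each group of a two-variant test.

    Hypothetical helper for illustration; Mailchimp does this split itself.
    """
    test_size = round(list_size * test_fraction)
    per_variant = test_size // 2  # half the sample gets A, half gets B
    remainder = list_size - 2 * per_variant  # everyone else gets the winner
    return {"A": per_variant, "B": per_variant, "winner": remainder}


def margin_of_error(rate: float, n: int, z: float = 1.96) -> float:
    """Approximate margin of error for a rate observed over n recipients.

    Uses the normal approximation; z=1.96 corresponds to about 95% confidence.
    """
    return z * math.sqrt(rate * (1 - rate) / n)


# The 5000-user example from the text, with a 20% test sample.
print(split_sample(5000, 0.20))

# A 500-user list with a 40% test sample.
print(split_sample(500, 0.40))

# Assumed open rate of 20% (a made-up figure), measured on 500 vs 100 recipients.
print(margin_of_error(0.20, 500))
print(margin_of_error(0.20, 100))
```

With an assumed open rate of 20%, the uncertainty on each variant is several times larger with 100 recipients than with 500, which is why a bigger test percentage makes sense for small lists.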

The key variable in deciding the winner

Once the split has been configured, it is time to define the criterion that decides which mailing wins. There are three default modes to choose from: click-through rate, open rate and revenue. Depending on what we want to test, we will use the open rate or the click-through rate. For example, if we are testing the Subject or the Sender, the open rate is the most common choice. This is logical, since the first hurdle a successful email marketing campaign has to overcome is getting most recipients to open the email. Even so, we must carefully consider what type of audience we are targeting and what our objective is. If, on the other hand, we are testing the Content, the criterion to use is generally the click-through rate.
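As a rough sketch of how this decision works, the snippet below picks a winner from two variants according to the chosen criterion. Everything here is hypothetical: the `VariantResult` class, the metric names and the figures are made up for illustration and are not Mailchimp's API or real campaign data. We also assume both rates are calculated over emails sent.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """Results of one variant in the test sample (hypothetical structure)."""
    name: str
    sent: int
    opens: int
    clicks: int
    revenue: float = 0.0


# Rule of thumb from the text: which criterion suits each tested variable.
SUGGESTED_METRIC = {
    "subject": "open_rate",
    "sender": "open_rate",
    "content": "click_rate",
}


def metric_value(result: VariantResult, metric: str) -> float:
    """Compute the value of the chosen winning criterion for one variant."""
    if metric == "open_rate":
        return result.opens / result.sent
    if metric == "click_rate":
        return result.clicks / result.sent
    if metric == "revenue":
        return result.revenue
    raise ValueError(f"Unknown metric: {metric}")


def pick_winner(results: list, metric: str) -> VariantResult:
    """Return the variant with the highest value for the chosen criterion."""
    return max(results, key=lambda r: metric_value(r, metric))


# Made-up figures: A is opened more often, B is clicked more often.
a = VariantResult("A", sent=500, opens=120, clicks=30)
b = VariantResult("B", sent=500, opens=110, clicks=45)

print(pick_winner([a, b], SUGGESTED_METRIC["subject"]).name)
print(pick_winner([a, b], SUGGESTED_METRIC["content"]).name)
```

The same two variants can produce different winners depending on the criterion, which is why it is worth matching the criterion to the variable being tested.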

We also need to specify how long the test will run before a winner is chosen, which can range from a few hours to several days. Ideally, the test and the final mailing should be carried out in the shortest possible time, so that timing (opening the mailing on a weekend is not the same as opening it on a working day) does not affect the results. In any case, there is a Manual selection option that lets us choose the winning combination ourselves after the campaign has been sent to the initial sample. It is especially useful when the list of users is very heterogeneous or we simply do not have a clear idea of which is the best option.

Once the test has been defined, all that remains is to set up the different campaigns. The screens that follow will differ depending on the variable being tested:

- Message subject: In the “Setup” screen, two different subject possibilities will be displayed.

- Sender: In the “Setup” screen, two different sender possibilities will be displayed.

- Content: There will be a single “Setup” screen, but it will be in “Design” where there will be two design options to be tested.

- Sending time: We will configure different sending days and times in the “Setup” screen.

Practical example

In our case we have carried out an A/B Test in order to know what works best:

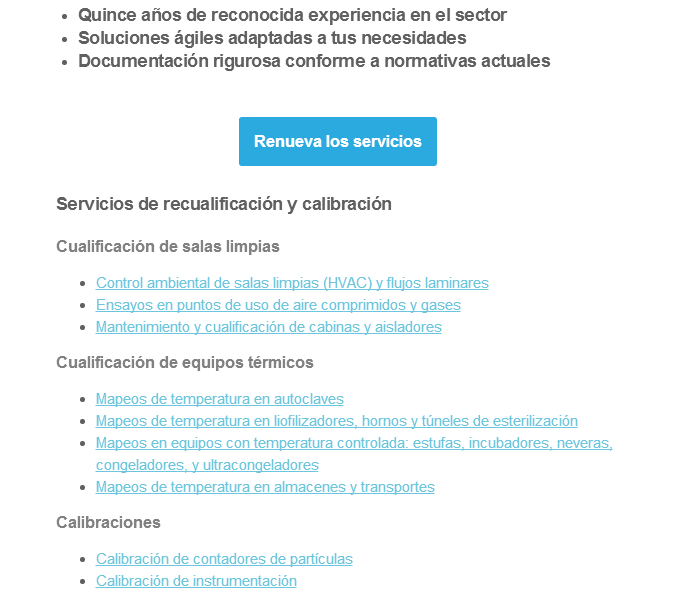

- List of text-only links

- List of links with text + image

To find out, we selected click-through rate as the winning criterion and created two templates for the test.

In the first template we used only an ordered list of text links. They take up little space and can be read quickly. Access is in principle more comfortable, since there is no need to scroll to see all the content.

The second template had the same links as the first, but with images added to make the message more attractive and pleasant. The disadvantage was that the newsletter became considerably longer and the user had to scroll to reach all the content.

Measured by click-through rate, the template with images performed better, so we conclude that, given the type of content and the type of user this kind of campaign is aimed at, it is advisable to add images to the mailing to improve its effectiveness.

Conclusions

Behind the use of A/B Testing lies a work philosophy that we at Agencia Reinicia try to instill in our clients and that we believe will lead to more efficient organizations in the medium and long term. It is about conceiving marketing as a process of constant improvement rather than a series of sequential, independent actions: results are continuously analyzed and each element of a campaign is tested in order to improve them.

An A/B test is extremely useful if used well. The more tests we have run previously, the more relevant the conclusions we can draw from each new one. It is a matter of polishing campaigns little by little until they reach a level of optimization that is difficult to surpass. You must be careful with the variables and, above all, with the monitoring of results. Having good information is as important as knowing how to interpret it, so it helps to understand beforehand which factors are the most relevant.

Mailchimp provides us with a very effective and easy-to-use tool. Try it out and let us know how it works for you.